Manage your servers with natural language.

By RCDevs S.A. · 15 years in cybersecurity

Ask your preferred AI. ManageLM agents execute tasks on your Linux and Windows servers using a secure, privately hosted LLM service. No SSH. No scripts. No risk. Just fun!

Built on trusted foundations

The future of IT management.

No more rigid dashboards and scripts. The next era of infrastructure is conversational, intelligent, and secure by design.

Talk to your infrastructure

The future is natural conversations with your IT systems — voice or prompts, no more hard-coded interfaces. Just talk to an AI that distributes tasks to a fleet of autonomous sysop agents, each with their own intelligence and specialized skills.

Local + Cloud AI, the right way

One of the few platforms that combines local and cloud AI models. Local agents handle routine jobs with full confidentiality, so your data never leaves the server. Cloud AI provides the reasoning power when you need it.

Three layers. Zero complexity.

From natural language to server execution in seconds — with every command validated and constrained.

Talk to Claude in plain English

Use the Claude app — the same AI you already know — to describe what you need. "Restart the app", "Check logs", "Update packages on all staging servers".

Portal authenticates & routes

The ManageLM portal verifies your identity via OAuth 2.0, checks permissions, identifies the target agent, and dispatches the task over a secure WebSocket channel.

Agent executes with a local LLM

The lightweight agent uses Ollama (or any compatible LLM) in your infrastructure to interpret the task and generate commands. It validates each command against the skill's allowlist and only then executes it. A single LLM server can serve all your agents. Sensitive data never leaves your network.

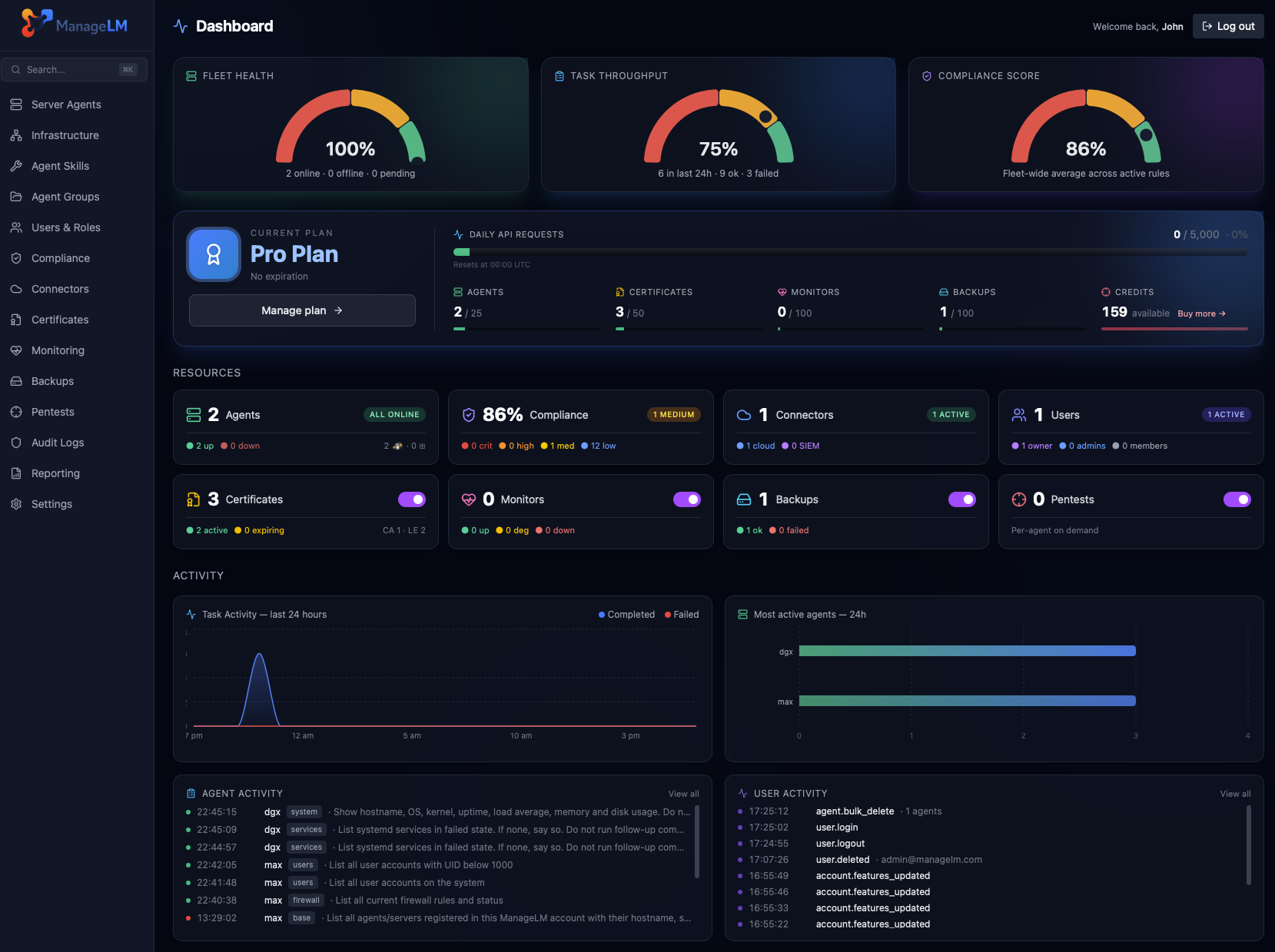

See it in action.

A clean, dark interface built for sysadmins who need clarity, speed, and full control.

Security isn't a feature.

It's the architecture.

Every layer prevents unauthorized actions — even if the LLM hallucinates or faces prompt injection.

Command Allowlisting

Skills define explicit permitted commands. Every AI-generated command is validated in code. Anything outside is blocked.
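As an illustration only, here is a minimal sketch of what validating a generated command in code could look like. It assumes the allowlist is matched against the executable name and that shell metacharacters are rejected outright. The `ALLOWED` set and the `is_allowed` function are hypothetical names, not ManageLM's actual implementation.

```python
import shlex

# Hypothetical allowlist for an illustrative "systemd" skill.
ALLOWED = {"systemctl", "journalctl"}

# Characters that would let one command smuggle in another.
SHELL_META = set(";|&`><\n")


def is_allowed(command: str, allowed: set[str] = ALLOWED) -> bool:
    """Return True only if the command's executable is on the allowlist."""
    if any(ch in SHELL_META for ch in command) or "$(" in command:
        return False  # reject chaining, command substitution and redirection
    try:
        argv = shlex.split(command)
    except ValueError:
        return False  # unbalanced quotes and similar parse errors
    return bool(argv) and argv[0] in allowed


print(is_allowed("systemctl restart nginx"))           # True
print(is_allowed("rm -rf /"))                          # False
print(is_allowed("systemctl status nginx; rm -rf /"))  # False
```

The check is deliberately deny-by-default: anything that fails to parse, or that is not explicitly listed, is treated as blocked.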

Local LLM — Data Stays In Your Network

Task interpretation runs via a local Ollama instance shared across your infrastructure. Passwords, configs, logs — nothing leaves your network.

Read-Only by Default

Agents with no allowed_commands can only run read-only operations. Write access requires explicit config.

Zero Inbound Ports

Agents connect outward via WebSocket. Your servers never expose a port. No SSH, no VPN, no attack surface.

Secrets Hidden from AI

Secrets are stored as environment variables. The LLM sees only $VAR_NAME; the actual values are injected at execution time.
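The idea can be sketched as a late substitution step: the planned command carries placeholders, and values are resolved only when the command is about to run. This is a simplified illustration. The `inject_secrets` function, the placeholder pattern, and the sample command are assumptions, and a real agent would read from its own environment (for example `os.environ`) rather than a literal dictionary.

```python
import re

# Placeholders of the form $VAR_NAME (upper-case names only, by assumption).
PLACEHOLDER = re.compile(r"\$([A-Z_][A-Z0-9_]*)")


def inject_secrets(argv: list[str], env: dict[str, str]) -> list[str]:
    """Replace $VAR_NAME placeholders with values just before execution."""
    def lookup(match: re.Match) -> str:
        name = match.group(1)
        if name not in env:
            raise KeyError(f"secret {name} is not defined")
        return env[name]

    return [PLACEHOLDER.sub(lookup, arg) for arg in argv]


# What the LLM produced, and what would be logged: placeholders only.
planned = ["mysql", "-u", "app", "-p$DB_PASSWORD", "-e", "SELECT 1"]

# What is actually executed: values resolved from the environment.
resolved = inject_secrets(planned, {"DB_PASSWORD": "s3cret"})
print(resolved[3])  # -ps3cret
```

Because substitution returns a new list, the planned command with its placeholders stays untouched and remains safe to log or show to the model.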

Ed25519 Signed Messages

Every portal-to-agent message is cryptographically signed. Agents reject unsigned or tampered messages — no command can be injected via the WebSocket.

Change Tracking & Revert

Every mutating task is git-snapshotted before and after. See exactly what changed, get the full diff, and revert any task with one click within 30 days.

Kernel Sandbox (Landlock + seccomp)

Opt-in kernel confinement (Linux). Landlock restricts filesystem writes to allowed paths. seccomp-bpf blocks dangerous syscalls like mount and reboot. Even if a command passes all other checks, the kernel stops it.

✓ LLM is untrusted by design

The AI generates commands, but every command is validated in code before execution. Prompt injection or hallucinations are blocked.

↻ Execution limits per task

Max 10 turns · 120s timeout · 8KB output cap. Every operation logged in a full audit trail.
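A rough sketch of how a timeout and an output cap can be enforced around a single command, assuming both limits apply per command. The `run_limited` helper is hypothetical, and the turn limit is not modeled here.

```python
from __future__ import annotations

import subprocess
import sys

TIMEOUT_S = 120          # timeout quoted above
OUTPUT_CAP = 8 * 1024    # 8KB output cap quoted above


def run_limited(argv: list[str], timeout: float = TIMEOUT_S) -> tuple[int | None, bytes]:
    """Run one command; return (exit code, or None on timeout, and capped output)."""
    try:
        proc = subprocess.run(
            argv,
            stdout=subprocess.PIPE,
            stderr=subprocess.STDOUT,  # merge stderr so one cap covers both
            timeout=timeout,
        )
        return proc.returncode, proc.stdout[:OUTPUT_CAP]
    except subprocess.TimeoutExpired as exc:
        # The child is killed by subprocess.run; keep whatever was captured.
        return None, (exc.output or b"")[:OUTPUT_CAP]


# A command that prints about 20 KB is truncated to the cap.
code, out = run_limited([sys.executable, "-c", "print('x' * 20000)"])
print(code, len(out))  # 0 8192
```

Note that this version buffers the full output before truncating. A streaming reader that stops at the cap would bound memory use as well.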

31 skills. 230+ operations.

From systemd to Kubernetes, databases to VPNs — every skill is security-scoped with exact command allowlists.

Security, monitoring, PKI & backups.

Built in. One click.

Automated security scanning, full service discovery, SSH & sudo access mapping, and user activity auditing on every server — no skills required, no prompting. Just actionable results.

Security Audit

One-click security scan across 18 checks — SSH hardening, firewall rules, TLS ciphers, certificate expiry, SUID binaries, failed logins, Docker exposure, and more. AI-analyzed findings with severity ratings and actionable remediation.

- Public vs. private server context

- One-click automated remediation

- PDF security report export

- CVE scanning with actively-exploited alerts

Pentests

Automated penetration testing from the attacker's perspective. Scans your public servers with nmap, nuclei, and testssl.sh. AI-generated reports with findings, severity scores, and compliance mapping.

- 9 security tests (ports, vulns, TLS, web, DNS)

- Self-scan enforcement & domain verification

- Results feed into compliance frameworks

System Inventory

Discover every running service, installed package, container, database, and user account on your servers. AI-structured into categorized entries with automatic version detection.

- 12 service categories

- Automatic package version extraction

- Fleet-wide PDF inventory export

SSH & Sudo Access

Map every SSH key and sudo privilege across your infrastructure. Fingerprints are matched against team profiles for identity resolution — know exactly who has access to what, and manage it in plain English.

- Automatic identity mapping

- NOPASSWD sudo detection

- Fleet-wide PDF access report
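Fingerprint matching for SSH keys can be illustrated with the standard OpenSSH SHA256 fingerprint: the SHA-256 digest of the decoded key blob, base64-encoded without padding. The sketch below assumes plain `type blob comment` entries in `authorized_keys` (no leading options) and a hypothetical `profiles` mapping from fingerprint to team member. The key used is a dummy all-zero blob, not a real key.

```python
import base64
import hashlib


def ssh_fingerprint(authorized_keys_line: str) -> str:
    """Return the OpenSSH-style SHA256 fingerprint of a 'type blob comment' line."""
    blob_b64 = authorized_keys_line.split()[1]
    digest = hashlib.sha256(base64.b64decode(blob_b64)).digest()
    return "SHA256:" + base64.b64encode(digest).decode().rstrip("=")


def resolve_owner(authorized_keys_line: str, profiles: dict[str, str]) -> str:
    """Map a key to a team member, or 'unknown' if no profile matches."""
    return profiles.get(ssh_fingerprint(authorized_keys_line), "unknown")


def dummy_key_line(fill: int, comment: str) -> str:
    """Build a structurally valid but fake ssh-ed25519 entry for the demo."""
    blob = b"\x00\x00\x00\x0bssh-ed25519" + b"\x00\x00\x00\x20" + bytes([fill]) * 32
    return f"ssh-ed25519 {base64.b64encode(blob).decode()} {comment}"


alice_key = dummy_key_line(0, "alice@laptop")
stray_key = dummy_key_line(1, "root@old-server")

# Hypothetical team profile store: fingerprint -> person.
profiles = {ssh_fingerprint(alice_key): "Alice"}

print(resolve_owner(alice_key, profiles))  # Alice
print(resolve_owner(stray_key, profiles))  # unknown
```

Keys that resolve to "unknown" are the interesting ones in an access review, since they grant access without a known owner.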

Activity Audit

Track who logged in, what they did with sudo, which config files changed, and what packages were installed — from standard Linux logs or Windows Event Log. Identity mapping links system users to your ManageLM team.

- No auditd required: works on any Linux or Windows

- Team identity mapping via GECOS

- Fleet-wide PDF activity report

Service Monitoring

Track availability and response time of 46 services across your fleet — databases, web servers, message brokers, VPNs, and more. Up/down transitions trigger email, webhook and in-app alerts, with hourly response-time rollups for trend analysis.

- 46 service types (TCP, HTTP, ICMP)

- Email, webhook & in-app alerts

- Response time history & rollups
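To make the alerting and rollup behavior concrete, here is a small sketch under simple assumptions: each check produces a `(timestamp, is_up, response_ms)` sample, an alert fires only when the state changes, and rollups average the response time of successful checks per hour. The function names and the sample layout are illustrative, not the product's data model.

```python
from collections import defaultdict
from datetime import datetime
from statistics import mean


def transitions(samples):
    """Yield (timestamp, new_state) whenever the up/down status changes."""
    previous = None
    for ts, is_up, _ms in samples:
        if previous is not None and is_up != previous:
            yield ts, "up" if is_up else "down"
        previous = is_up


def hourly_rollup(samples):
    """Average response time of successful checks, bucketed by hour."""
    buckets = defaultdict(list)
    for ts, is_up, ms in samples:
        if is_up:
            buckets[ts.replace(minute=0, second=0, microsecond=0)].append(ms)
    return {hour: mean(values) for hour, values in sorted(buckets.items())}


samples = [
    (datetime(2025, 1, 1, 10, 0), True, 20.0),
    (datetime(2025, 1, 1, 10, 30), True, 40.0),
    (datetime(2025, 1, 1, 11, 0), False, None),
    (datetime(2025, 1, 1, 11, 30), True, 30.0),
]

for ts, state in transitions(samples):
    print(ts.isoformat(), state)   # one line per up/down change
print(hourly_rollup(samples))
```

Alerting on transitions rather than on every failed check keeps a long outage from producing a stream of duplicate notifications.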

Certificate Management

Issue, renew, and revoke TLS certificates from a built-in internal CA or Let's Encrypt. Certificates are deployed directly to agents over WebSocket with automatic service reload. Daily auto-renewal sweep with CRL generation.

- Internal CA + Let's Encrypt ACME

- Auto-renewal & CRL generation

- Private keys never stored on portal
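A daily renewal sweep essentially selects certificates that are close to expiry. The sketch below shows that selection step only, assuming a 30-day renewal window. The window, the `due_for_renewal` function, and the certificate names are all hypothetical, and issuing or deploying the renewed certificate is out of scope.

```python
from datetime import datetime, timedelta, timezone

# Assumed renewal window; the real threshold is not stated in this document.
RENEW_BEFORE = timedelta(days=30)


def due_for_renewal(certs: dict[str, datetime], now: datetime) -> list[str]:
    """Return names of certificates expiring within the renewal window."""
    return sorted(
        name for name, not_after in certs.items()
        if not_after - now <= RENEW_BEFORE
    )


now = datetime(2025, 6, 1, tzinfo=timezone.utc)
certs = {
    "api.example.internal": datetime(2025, 6, 10, tzinfo=timezone.utc),   # 9 days left
    "db.example.internal": datetime(2025, 9, 1, tzinfo=timezone.utc),     # 92 days left
    "old.example.internal": datetime(2025, 5, 20, tzinfo=timezone.utc),   # already expired
}

print(due_for_renewal(certs, now))
# ['api.example.internal', 'old.example.internal']
```

Already-expired certificates fall inside the window too, so a sweep that was missed for a few days still picks them up on the next run.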

System Backups

End-to-end encrypted filesystem backups to your own S3 storage (OVH, AWS, R2, B2, Wasabi, Scaleway, MinIO). Agent-side AES-256 encryption means ManageLM never sees your data. Downloads are decrypted as they stream, and any backup can be restored to any agent.

- 7 S3-compatible providers supported

- AES-256-CBC + HMAC, keys per backup

- Service quiesce for consistent DB snapshots

Map your security posture

to industry standards.

Automatically evaluate your fleet against CIS, SOC 2, PCI DSS, ISO 27001, NIS2, NIST CSF, and HIPAA. Detect drift, track progress, and generate auditor-ready evidence PDFs — all from the same security scans.

8 Frameworks

CIS Level 1, CIS Docker, SOC 2, PCI DSS, ISO 27001, NIS2, NIST CSF, and HIPAA — all evaluated automatically against your fleet's security scan results. Add custom frameworks with a single JSON file.

Drift Detection

When a rule that previously passed starts failing, ManageLM detects it instantly. In-app alerts and optional email notifications so your team catches regressions before auditors do.
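Drift, as described here, is a pass-to-fail change between two evaluations. A minimal sketch, assuming each scan yields a mapping from rule ID to pass/fail. The `detect_drift` function and the rule IDs are made up for illustration.

```python
def detect_drift(previous: dict[str, bool], current: dict[str, bool]) -> list[str]:
    """Return rules that passed in the previous scan and fail in the current one."""
    return sorted(
        rule for rule, passed in current.items()
        if not passed and previous.get(rule) is True
    )


previous_scan = {"ssh-root-login-disabled": True, "firewall-enabled": True, "tls-min-1.2": False}
current_scan = {"ssh-root-login-disabled": False, "firewall-enabled": True, "tls-min-1.2": False}

print(detect_drift(previous_scan, current_scan))  # ['ssh-root-login-disabled']
```

A rule that was already failing, or that is new in the current scan, is not reported as drift, which keeps alerts focused on regressions.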

Evidence PDFs

Generate per-framework audit evidence documents with control-by-control status, technical check results, per-server findings with raw command output, remediation guidance, and a full infrastructure inventory.

One platform, many ways in.

ManageLM is not tied to a single tool. Use it from Claude, ChatGPT, your terminal, the web portal, VS Code, Slack, or n8n — pick what fits your workflow.

| Scenario | Claude MCP | ChatGPT | Shell | Portal | VS Code | Slack | n8n |
|---|---|---|---|---|---|---|---|
| Natural language tasks | ✓ | ✓ | ✓ | ✓ | ✓ | Slash cmds | Structured |
| Multi-step reasoning | ✓ Best | ✓ | — | — | ✓ | — | Workflows |
| Scheduled & automated tasks | Via portal | Via portal | ✓ Cron | ✓ Built-in | — | Webhooks | ✓ Native |
| Security audits & reports | ✓ | ✓ | ✓ | ✓ | — | ✓ | |
| Fleet operations | ✓ | ✓ | ✓ | ✓ Bulk select | ✓ | ✓ | ✓ |
| CI/CD & scripting | — | — | ✓ Best | ✓ API | — | Alerts | ✓ Best |
| Team collaboration | Per user | Per user | Per user | ✓ RBAC + audit | Per user | ✓ Shared channels | ✓ Shared |
| Offline / air-gapped | ✗ | ✗ | ✓ | ✓ Self-hosted | ✗ | ✗ | ✓ Self-hosted |
| Best for | Complex tasks & exploration | Alternative LLM ecosystem | Scripting, cron & automation | Dashboards, RBAC & reports | Dev workflow in editor | Team alerts & approvals | Automation pipelines |

Not just another management tool.

The only platform combining AI automation with hard-enforced security.

| Capability | ManageLM | SSH + Scripts | Ansible / Puppet | Generic AI |
|---|---|---|---|---|
| Natural language interface | ✓ | ✗ | ✗ | ✓ |
| Command allowlisting (hard-enforced) | ✓ In code | ✗ | ~ Limited | ✗ |
| Private LLM (data in your network) | ✓ | N/A | N/A | ✗ Cloud only |
| Zero inbound ports | ✓ | ✗ Port 22 | ✗ SSH | ~ Varies |
| No learning curve | ✓ Just talk | ✗ Bash | ✗ YAML | ✓ |
| Skill-scoped security | ✓ | ✗ Full access | ~ Roles | ✗ |
| Kernel sandbox (Landlock/seccomp) | ✓ | ✗ | ✗ | ✗ |
| Full audit trail | ✓ | ~ Manual | ✓ | ✗ |
| Multi-tenant RBAC | ✓ | ✗ | ~ Limited | ✗ |
| Built-in security audits | ✓ + remediation | ✗ | ✗ | ✗ |
| Compliance frameworks & evidence PDFs | ✓ 8 frameworks | ✗ | ✗ | ✗ |
| Automated penetration testing | ✓ 9 tests | ✗ | ✗ | ✗ |
| Service monitoring & alerts | ✓ 46 services | ✗ | ✗ | ✗ |
| Cloud infrastructure connectors | ✓ | ✗ | ✗ | ✗ |
| Certificate management & PKI | ✓ CA + LE | ✗ | ✗ | ✗ |
| Encrypted S3 backups & restore | ✓ AES-256 client-side | ✗ | ✗ | ✗ |

100% free. No catches.

Every feature, every integration, every skill — completely free for up to 10 agents. No credit card. No time limit.

- All 31 built-in skills

- 230+ operations

- Multi-tenant teams & RBAC

- Server groups

- Scheduled tasks

- Webhooks & API keys

- Full audit trail

- Passkeys & MFA

- Trial LLM included

- Local LLM support

- Security audits & inventory

- Service monitoring & alerts

No credit card required · No feature gates · Full platform access

Need more agents?

Scale beyond 10 agents with flexible plans for growing teams and enterprises.

- Unlimited agents

- Enterprise-grade Pentests

- Priority support

- Custom onboarding

- Volume discounts

Deploy your way.

Start with our managed cloud in seconds, or self-host on your own infrastructure with Docker.

ManageLM Cloud

Managed SaaS — start in minutes

- Free for up to 10 agents

- Fully managed infrastructure

- Automatic updates

- Trial LLM included

ManageLM Self-Hosted

Run on your infrastructure

- Full data sovereignty

- Docker Compose deployment

- Proxied LLM — centralized API keys

- No external dependencies

Everything you need at scale.

Multi-Tenant Teams

Owner, admin, member roles with granular permissions. Invite teammates, scope access per server or group.

Server Groups

Organize agents into groups. Run operations across entire groups with a single request.

Scheduled Tasks

Cron-based schedules for backups, log rotation, health checks — all automated.

Webhooks & API Keys

Real-time notifications on events. Full REST API for integration into existing workflows.

Full Audit Trail

Every action logged with timestamps, IPs, and full context. Complete accountability.

Passkeys & MFA

WebAuthn/FIDO2 passwordless login. Multi-factor auth and IP whitelisting for MCP.

Get in touch.

Questions, demos, or enterprise needs? We'd love to hear from you.

Documentation

Response Time

We typically respond within 24 hours on business days.
