Encrypted backups to your own S3.

Agent-side AES-256 encryption means ManageLM never sees your data — only ciphertext transits to your bucket. Service-quiesce for consistent database snapshots. Streaming restore-to-any-agent.

Overview

ManageLM backups follow one strict design principle: your data never touches ManageLM infrastructure. Every archive is encrypted on the agent with a per-backup key, uploaded directly from the agent to your S3 bucket, and decrypted only when you — or another agent you pick — restore it.

Pick an S3 provider once in Settings. From then on, backups are just a schedule, a source path, and a retention count. The agent tars, encrypts, streams to S3, and reports back. No data ever flows through the portal.

Zero-knowledge architecture. ManageLM holds only the wrapped master key and metadata. Even a full portal database compromise would expose nothing but wrapped keys and metadata; the archives in your bucket stay encrypted at rest and are useless without the corresponding master keys, which are delivered to the agent on demand over the existing mTLS channel.

Supported S3 providers

Provider-agnostic: one set of credentials, any S3-compatible storage. Each provider has a one-click preset that fills in the endpoint URL, and the Test button validates the credentials with a HeadBucket request before saving.
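
As a rough illustration of what a HeadBucket credential check can look like, here is a minimal sketch. It assumes a boto3-style S3 client; the function names `check_bucket_access` and `make_client` are hypothetical and are not part of ManageLM.

```python
def check_bucket_access(client, bucket: str) -> tuple[bool, str]:
    """Return (ok, message) after one HeadBucket call against `bucket`.

    `client` is assumed to be a boto3 S3 client, or any object exposing
    the same `head_bucket(Bucket=...)` method.
    """
    try:
        client.head_bucket(Bucket=bucket)
    except Exception as exc:  # boto3 raises ClientError on 403 / 404
        return False, f"{type(exc).__name__}: {exc}"
    return True, "ok"


def make_client(endpoint_url: str, access_key: str, secret_key: str):
    """Build a boto3 client for an S3-compatible endpoint (requires boto3)."""
    import boto3  # imported lazily so the check above has no dependencies

    return boto3.client(
        "s3",
        endpoint_url=endpoint_url,
        aws_access_key_id=access_key,
        aws_secret_access_key=secret_key,
    )
```

HeadBucket returns no body, so it is a cheap way to confirm that the endpoint, bucket name, and credentials agree before anything is saved.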

- OVH Object Storage

- Amazon S3

- Cloudflare R2

- Backblaze B2

- Wasabi

- Scaleway Object Storage

- MinIO (self-hosted)

Secret keys are stored AES-256-GCM encrypted at rest on the portal. Cloudflare R2 is a popular pick for its zero-egress pricing; OVH Object Storage is preferred for EU-based data residency; MinIO fits fully self-hosted deployments.

End-to-end encryption

Every backup has its own 32-byte random master key, stored wrapped server-side. Before each run, the portal pushes the key to the agent over the existing mTLS WebSocket — never over HTTP, never logged.

AES-256-CBC + HMAC-SHA256

Industry-standard encrypt-then-MAC pattern. Ciphertext integrity is verified before a single byte is written to disk during restore.

Domain-separated subkeys

Encryption key = SHA256(master ‖ "enc"), MAC key = SHA256(master ‖ "mac") — no key reuse across operations.
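
The derivation above can be sketched in a few lines with the standard library. This assumes the labels are the ASCII bytes `enc` and `mac` appended directly to the master key; `derive_subkeys` is an illustrative name, not the agent's actual function.

```python
import hashlib
import secrets


def derive_subkeys(master: bytes) -> tuple[bytes, bytes]:
    """Derive separate encryption and MAC keys from one 32-byte master key."""
    if len(master) != 32:
        raise ValueError("master key must be 32 bytes")
    enc_key = hashlib.sha256(master + b"enc").digest()  # SHA256(master || "enc")
    mac_key = hashlib.sha256(master + b"mac").digest()  # SHA256(master || "mac")
    return enc_key, mac_key


# Example: a fresh random master key yields two distinct 32-byte subkeys.
master = secrets.token_bytes(32)
enc_key, mac_key = derive_subkeys(master)
```

Because the two labels differ, the AES key and the HMAC key are never the same bytes even though both come from a single stored secret.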

Wire format

IV (16 bytes) ‖ ciphertext ‖ HMAC tag (32 bytes). Streamable: the agent never needs to buffer the whole archive before sending it.
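
To make the framing concrete, the sketch below builds and checks a frame in that layout. It treats the ciphertext as opaque bytes (AES itself is left out) and assumes the HMAC covers IV ‖ ciphertext, which the text above does not state explicitly. The names `seal` and `open_frame` are hypothetical.

```python
import hashlib
import hmac

IV_LEN = 16   # AES block size
TAG_LEN = 32  # HMAC-SHA256 output size


def seal(iv: bytes, ciphertext: bytes, mac_key: bytes) -> bytes:
    """Assemble IV || ciphertext || tag (tag assumed to cover IV || ciphertext)."""
    tag = hmac.new(mac_key, iv + ciphertext, hashlib.sha256).digest()
    return iv + ciphertext + tag


def open_frame(blob: bytes, mac_key: bytes) -> tuple[bytes, bytes]:
    """Split a frame and verify its tag; return (iv, ciphertext) or raise."""
    if len(blob) < IV_LEN + TAG_LEN:
        raise ValueError("frame too short")
    iv, ciphertext, tag = blob[:IV_LEN], blob[IV_LEN:-TAG_LEN], blob[-TAG_LEN:]
    expected = hmac.new(mac_key, iv + ciphertext, hashlib.sha256).digest()
    if not hmac.compare_digest(tag, expected):  # constant-time comparison
        raise ValueError("HMAC mismatch")
    return iv, ciphertext
```

Placing the tag last is what keeps the format streamable: the sender can update the HMAC as bytes go out and append the tag only once the final block has been sent.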

Fail-closed restore

HMAC mismatch at end-of-file destroys the download stream — the browser sees a broken file instead of partial, corrupt bytes.
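
One way to get this behaviour on a stream is to hold back the last 32 bytes seen so far and compare them against the running HMAC at end-of-file. The sketch below shows only that rolling window; a real pipeline would also decrypt each chunk before passing it on. It assumes the tag covers everything before it, and `verified_stream` and `IntegrityError` are illustrative names.

```python
import hashlib
import hmac
from typing import Iterable, Iterator

TAG_LEN = 32


class IntegrityError(Exception):
    """Raised when the trailing HMAC tag does not match the stream."""


def verified_stream(chunks: Iterable[bytes], mac_key: bytes) -> Iterator[bytes]:
    """Yield the body of the stream, withholding the trailing tag.

    Raises IntegrityError at end-of-stream if the tag is wrong, which a
    caller can use to tear down the download.
    """
    mac = hmac.new(mac_key, digestmod=hashlib.sha256)
    tail = b""  # always the most recent <= TAG_LEN bytes
    for chunk in chunks:
        buf = tail + chunk
        if len(buf) > TAG_LEN:
            body, tail = buf[:-TAG_LEN], buf[-TAG_LEN:]
            mac.update(body)
            yield body
        else:
            tail = buf
    if len(tail) != TAG_LEN or not hmac.compare_digest(tail, mac.digest()):
        raise IntegrityError("HMAC mismatch: aborting stream")
```

Bytes are released before the final check, so the consumer has to treat the exception as "discard everything received"; that is the role the broken download plays in the description above.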

The agent implementation is pure-Python via oscrypto — no cryptography package, no native build dependencies, works on every distro the agent supports.

Service quiesce for consistent snapshots

Backing up a live database directory usually produces a broken snapshot, because files are captured mid-transaction. ManageLM solves this with a simple service quiesce list: name the services you want stopped before the backup, and the agent:

- Stops each listed service via `systemctl stop` (Linux) or `net stop` (Windows), with a 30-second timeout per service.

- Runs the tar → encrypt → upload pipeline against a now-consistent filesystem.

- Restarts every service that was successfully stopped, inside a `try / finally`, so a backup failure (or even the agent being killed mid-run) never leaves services down.

Always returns services to running. The restart step is wrapped in a finally block, so whatever happens during the tar-and-upload stage — OOM kill, disk full, S3 credentials rotated — the quiesced services are always brought back up.
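
The stop, back up, restart sequence can be sketched as follows. This is a simplified Linux-only illustration, not the agent's code: `run_quiesced` and `_systemctl` are hypothetical names, and continuing with the backup when a service fails to stop is an assumption.

```python
import subprocess
from typing import Callable, Sequence

STOP_TIMEOUT_S = 30  # per-service timeout, as described above


def _systemctl(action: str, service: str) -> bool:
    """Run `systemctl <action> <service>`; return True on success."""
    try:
        result = subprocess.run(
            ["systemctl", action, service],
            timeout=STOP_TIMEOUT_S,
            capture_output=True,
        )
    except (subprocess.TimeoutExpired, OSError):
        return False
    return result.returncode == 0


def run_quiesced(
    services: Sequence[str],
    backup: Callable[[], None],
    control: Callable[[str, str], bool] = _systemctl,
) -> None:
    """Stop services, run `backup`, then restart whatever was stopped."""
    stopped: list[str] = []
    try:
        for name in services:
            if control("stop", name):
                stopped.append(name)  # only restart what we actually stopped
        backup()
    finally:
        for name in reversed(stopped):  # bring services back in reverse order
            control("start", name)
```

The `control` parameter exists only so the sequence can be exercised without touching real services. This sketch covers failures that surface as exceptions inside the agent process.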

Restore & portability

Backups are portable — they can be restored to the original agent or any other online agent. This is what makes the system useful beyond disaster recovery: it's also a fleet-wide file transfer tool.

- Download decrypted `.tar.gz`: mints a one-shot Redis token (5 min TTL), then streams the archive through a rolling-window HMAC/decrypt pipeline straight to the browser. Save dialog opens immediately; progress bar fills as the data arrives.

- Restore to any agent: pick a target agent and path. The agent downloads, verifies HMAC before touching disk, strips the top-level directory, and extracts with a path-traversal guard.

- Abort running backups: cancel a stuck upload and reclaim the snapshot slot.

- Detach on delete: when you delete an agent, its backups are not deleted. The `agent_id` is cleared instead. A Reassign button lets you attach the backup to a replacement agent later.
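
For the extraction step, the sketch below shows one way to strip the top-level directory and refuse entries that would land outside the target path. It is an illustration using only the standard library; `safe_extract` is a hypothetical name, and skipping links and device nodes is a simplification made here, not documented agent behaviour.

```python
import os
import shutil
import tarfile


def safe_extract(archive_path: str, dest: str) -> list[str]:
    """Extract a .tar.gz into `dest`, dropping the first path component.

    Raises ValueError if any entry would resolve outside `dest`.
    Returns the relative paths of the regular files written.
    """
    dest_root = os.path.realpath(dest)
    os.makedirs(dest_root, exist_ok=True)
    written: list[str] = []
    with tarfile.open(archive_path, "r:gz") as tar:
        for member in tar:
            _, _, relative = member.name.partition("/")
            if not relative:
                continue  # the top-level directory entry itself
            target = os.path.realpath(os.path.join(dest_root, relative))
            if os.path.commonpath([dest_root, target]) != dest_root:
                raise ValueError(f"path traversal blocked: {member.name}")
            if member.isdir():
                os.makedirs(target, exist_ok=True)
            elif member.isfile():
                os.makedirs(os.path.dirname(target), exist_ok=True)
                with tar.extractfile(member) as src, open(target, "wb") as out:
                    shutil.copyfileobj(src, out)
                written.append(relative)
            # symlinks, hard links and device nodes are skipped in this sketch
    return written
```

Resolving each target with `realpath` before comparing it to the destination root is what catches names such as `snapshot/../../etc/passwd`.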

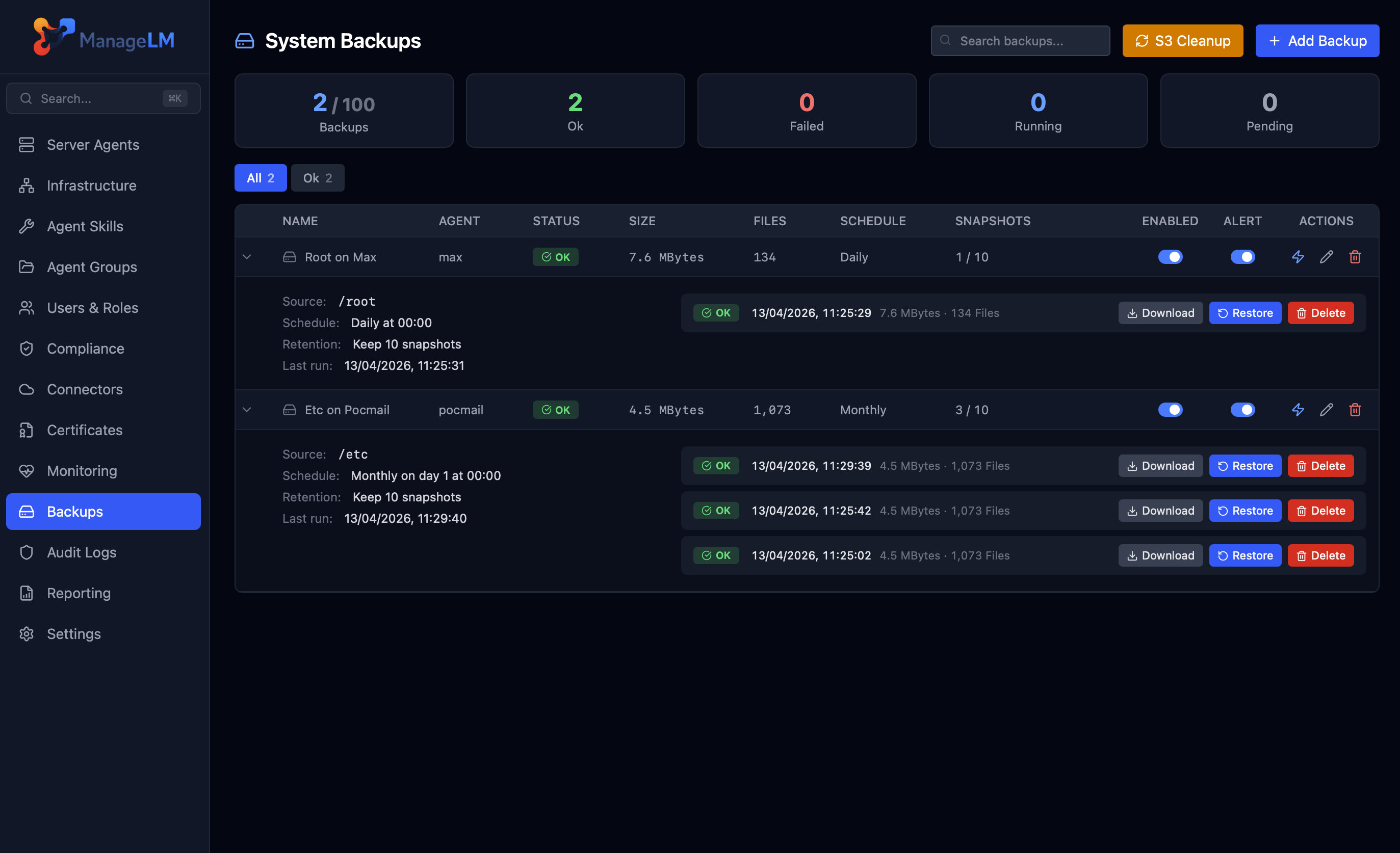

Schedule & retention

Each backup has its own cadence and retention policy:

| Schedule | Configurable fields |
|---|---|
| Every hour | None |
| Every 6 hours | None |
| Daily | Run time (HH:MM, agent-local) |
| Weekly | Day of week + run time |
| Monthly | Day of month (1–31, clamped) + run time |
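
As a small worked example of the monthly clamp, the sketch below assumes "clamped" means that a configured day beyond the end of the month runs on that month's last day. `clamp_day` is an illustrative helper, not a ManageLM function.

```python
import calendar


def clamp_day(year: int, month: int, day: int) -> int:
    """Return `day`, limited to the last valid day of the given month."""
    last_day = calendar.monthrange(year, month)[1]  # (weekday, days_in_month)
    return min(day, last_day)


# A backup configured for the 31st:
# February 2024 (leap year) -> 29, April 2024 -> 30, January 2024 -> 31
```

Under that assumption, a schedule set to day 31 still fires once in every month rather than skipping the shorter ones.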

FIFO retention — specify how many snapshots to keep (1–90). Older snapshots are rotated out automatically by the cleanup cron. A stalled-backup detector runs every 15 minutes and alerts you when scheduled backups miss their window by more than the configured grace period.
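
FIFO retention comes down to sorting snapshots by age and dropping everything past the keep count. The sketch below is one minimal way to express that; the `(key, created_at)` tuple shape and the name `snapshots_to_prune` are assumptions made for illustration.

```python
from datetime import datetime


def snapshots_to_prune(
    snapshots: list[tuple[str, datetime]], keep: int
) -> list[str]:
    """Return the object keys of snapshots beyond the newest `keep`."""
    if not 1 <= keep <= 90:
        raise ValueError("keep must be between 1 and 90")
    newest_first = sorted(snapshots, key=lambda s: s[1], reverse=True)
    return [key for key, _ in newest_first[keep:]]
```

A cleanup job could call this after each successful run and delete the returned keys from the bucket, so the count never exceeds the configured limit.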

Bring your own bucket. No vendor lock-in. No per-GB surcharges from ManageLM. No data residency questions. The bucket is yours, the keys are yours, the restore path is yours.

Encrypted before it leaves your server.

Configure S3 once, then schedule your first backup. The hardest part is picking a bucket name.